IMDA flags serious safety lapses on X and TikTok, places both under enhanced supervision

The Infocomm Media Development Authority (IMDA) issued Letters of Caution to X and TikTok, it said in a press release on Tuesday (31 March).

The statutory board issued the letters to X and TikTok due to “serious weaknesses in their measures to proactively detect and remove child sexual exploitation and abuse material (CSEM) and terrorism content, respectively”.

IMDA added that the two platforms are now under Enhanced Supervision.

Spike in harmful content detected on both platforms

IMDA found a sharp rise in child sexual exploitation and abuse material (CSEM) on X.

According to IMDA, CSEM cases stemming from X rose by about 120%, from 33 in 2024 to 73 in 2025.

All 73 cases were linked to Singapore and involved content sharing, links, and self-generated material.

On TikTok, IMDA detected 17 cases of terrorism content in 2025.

This marks the first time such cases were identified on Singapore-based TikTok accounts.

Content removed only after IMDA flagged cases

IMDA said all 73 CSEM cases on X violated the platform’s own policies. However, they were only removed after IMDA flagged them.

The authority had previously shared analysis and indicators with X in 2024.

Despite this, the issues persisted.

On TikTok, some terrorism-related content was reported through its in-app system.

TikTok initially assessed that the content did not violate its guidelines.

As with X, the content was only taken down after IMDA intervened.

Platforms must improve detection systems

Both X and TikTok have accepted the findings, IMDA said in its press release.

They have committed to improving their systems, including enhancing automated detection using AI and additional signals.

IMDA will require regular updates on their progress. The two platforms must also submit supporting data by 30 June 2026 to demonstrate whether their measures are effective.

Further action possible if issues not resolved

IMDA said it will not hesitate to take stronger action if needed.

This includes potential regulatory measures under the Broadcasting Act.

The authority stressed that CSEM and terrorism material are “very egregious harms”.

Wider gaps found across social media platforms

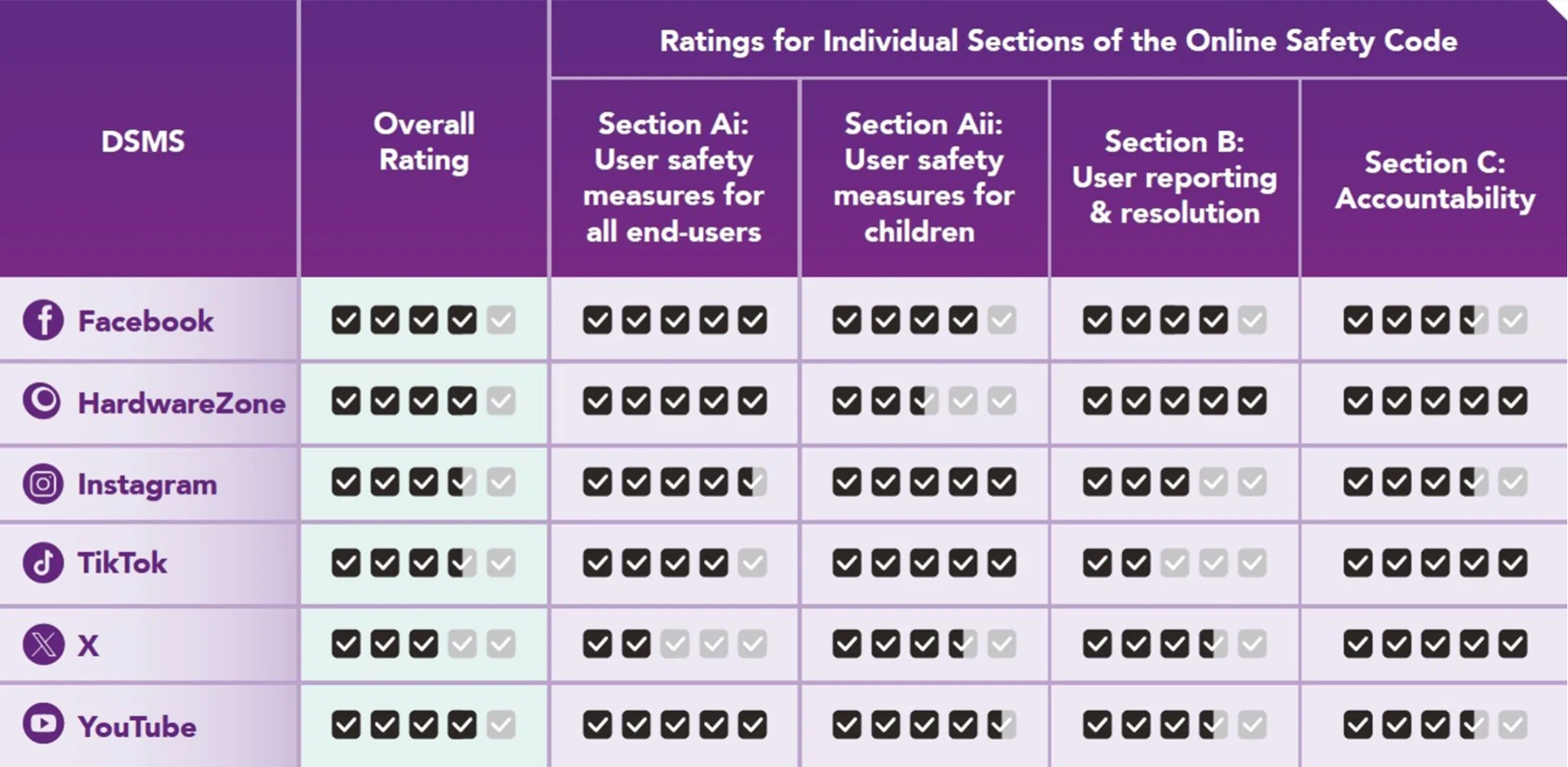

The findings were part of IMDA’s 2025 Online Safety Assessment Report.

The report evaluates how platforms manage the risks of harmful content.

While authorities noted improvements, gaps remain.

Source: IMDA website

Some platforms still lack effective child safety measures.

IMDA also flagged Facebook, YouTube and HardwareZone for weaknesses.

These gaps could expose children to age-inappropriate content.

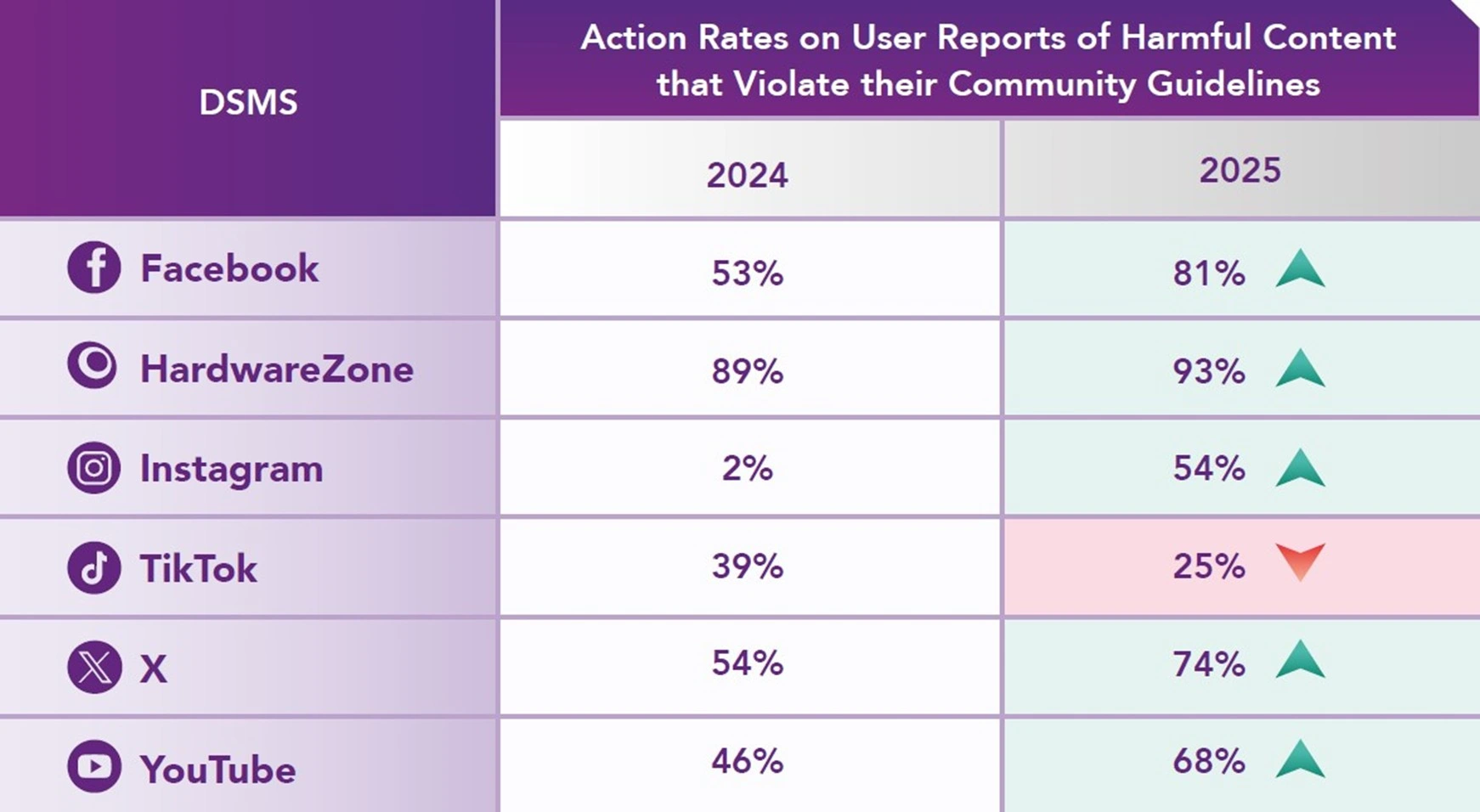

TikTok's action rate on user reports declined

Most platforms improved their handling of user reports, with action rates ranging from 54% to 93% in 2025.

This is up from around 50% or less in 2024.

However, TikTok was the only platform to see a decline.

Source: IMDA website

Its action rate dropped from 39% in 2024 to 25% in 2025.

IMDA said that protecting users, especially children, is its main priority and that it will continue working with platforms while holding them accountable.

The authority is also reviewing further safeguards, including extending age assurance measures to social media services.

IMDA will announce more details later this year.
